
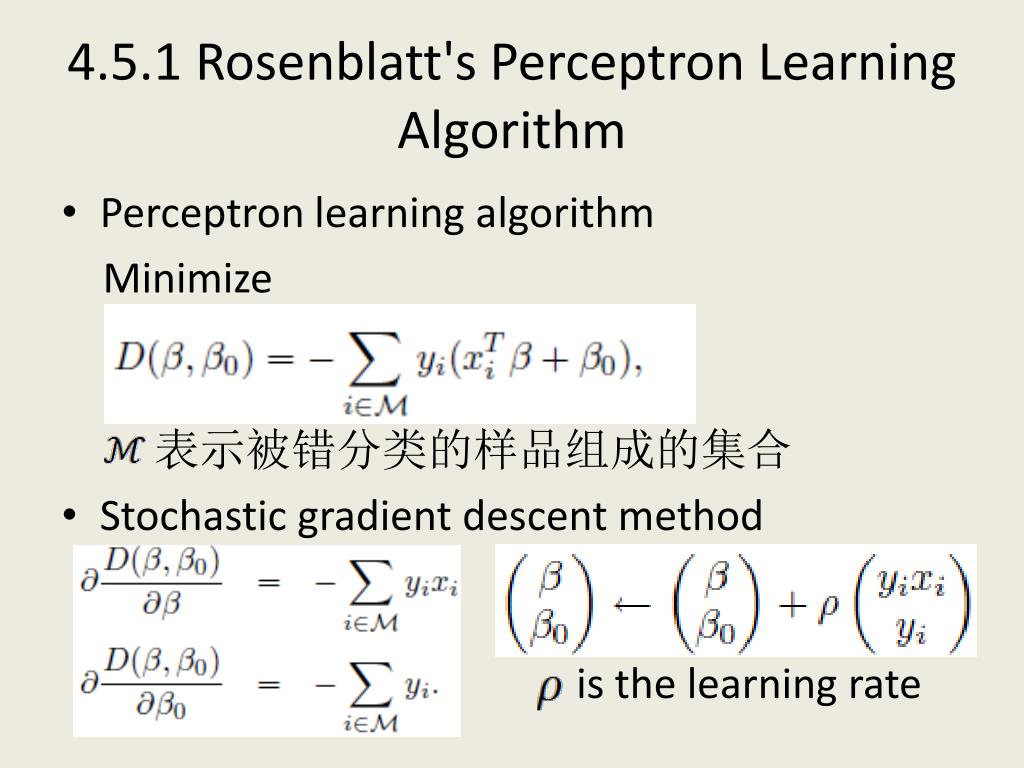

3/16/2023

Perceptron algorithm hyperplane

(If the data is not linearly separable, the Perceptron algorithm will loop forever.) The argument goes as follows: suppose there exists a $\mathbf{w}^*$ such that $y_i(\mathbf{x}_i^\top \mathbf{w}^*) > 0$ for all $(\mathbf{x}_i, y_i) \in D$.

First, we introduce and analyze a shifting Perceptron algorithm achieving the best known shifting bounds while using an unlimited budget. Second, we show that by applying to the Perceptron algorithm the simplest possible eviction policy, which discards a random support vector each time a new one comes in, we achieve a shifting bound close to ... The difference between the original approach and the ...
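The remark that the Perceptron never stops on non-separable data is easy to see in a small sketch. The version below is a minimal illustration in plain Python, not taken from any of the sources quoted here. It assumes labels in {-1, +1} and a bias absorbed as a constant last feature, and the `max_epochs` cap is an added safeguard (a hypothetical parameter) so the loop terminates even when no separating hyperplane exists.

```python
def perceptron(data, max_epochs=1000):
    """Online perceptron on (x, y) pairs with y in {-1, +1}.

    Returns (w, converged). max_epochs is an added safeguard, not part of
    the classic algorithm, so the loop stops on non-separable data.
    """
    dim = len(data[0][0])
    w = [0.0] * dim
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in data:
            score = sum(wi * xi for wi, xi in zip(w, x))
            if y * score <= 0:  # misclassified, or exactly on the hyperplane
                w = [wi + y * xi for wi, xi in zip(w, x)]
                mistakes += 1
        if mistakes == 0:  # a full pass with no updates: w separates the data
            return w, True
    return w, False


# Toy data; the last coordinate is a constant 1 that absorbs the bias term.
separable = [([2.0, 1.0, 1.0], 1), ([1.0, 3.0, 1.0], 1),
             ([-1.0, -2.0, 1.0], -1), ([-2.0, -1.0, 1.0], -1)]
w, converged = perceptron(separable)

# XOR is not linearly separable, so only the epoch cap stops the loop.
xor = [([0.0, 0.0, 1.0], -1), ([1.0, 1.0, 1.0], -1),
       ([0.0, 1.0, 1.0], 1), ([1.0, 0.0, 1.0], 1)]
_, xor_converged = perceptron(xor, max_epochs=50)
```

On the separable toy set the loop exits after a pass with no mistakes. On XOR it runs until the cap, which is the bounded stand-in for "looping forever".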

... neuron and shows convergence to the optimal separating hyperplane under certain ...

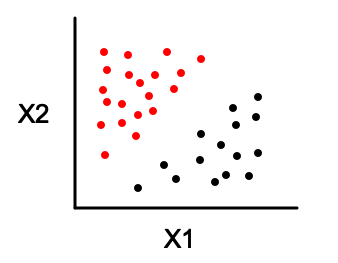

Eq. (3.9) is defined at all points. The algorithm is initialized from an arbitrary weight vector $\mathbf{w}(0)$, and the correction vector $\sum_{\mathbf{x} \in Y} \delta_{\mathbf{x}} \mathbf{x}$ is formed using the misclassified features. The weight vector is then corrected according to the preceding rule. The algorithm is known as the perceptron algorithm and is quite simple in its structure.

In this paper we analyze a modification of the perceptron learning algorithm of Rosenblatt (Rosenblatt, 1962) with the ...-margin. ... based on modifications of the perceptron algorithm that extend the ...

If a data set is linearly separable, the Perceptron will find a separating hyperplane in a finite number of updates.

Quiz: Given the theorem above, what can you say about the margin of a classifier? Which is more desirable, a large margin or a small margin? Can you characterize data sets for which the Perceptron algorithm will converge quickly? Draw an example.
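The batch form of the correction described above, where all misclassified features contribute to one correction vector, can be sketched as follows. This is an illustrative reading rather than the source's exact formulation. It assumes labels in {-1, +1}, takes the sign factor for each misclassified feature to be the negated label, and uses a constant step size `rho`. The helper names and the `max_iters` cap are hypothetical.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))


def batch_perceptron(data, w0, rho=1.0, max_iters=1000):
    """Batch perceptron: one correction per pass over the misclassified set.

    data: list of (x, y) with y in {-1, +1}; w0: arbitrary initial weights.
    Returns (w, converged). max_iters is an added safeguard.
    """
    w = list(w0)
    dim = len(w)
    for _ in range(max_iters):
        # Y: the features that the current w misclassifies.
        Y = [(x, y) for x, y in data if y * dot(w, x) <= 0]
        if not Y:
            return w, True
        # Correction vector: sum over Y of delta_x * x, assuming delta_x = -y.
        correction = [sum(-y * x[j] for x, y in Y) for j in range(dim)]
        # Step against the correction vector.
        w = [wj - rho * cj for wj, cj in zip(w, correction)]
    return w, False


# Toy separable data; the last coordinate is a constant 1 for the bias.
points = [([2.0, 1.0, 1.0], 1), ([1.0, 3.0, 1.0], 1),
          ([-1.0, -2.0, 1.0], -1), ([-2.0, -1.0, 1.0], -1)]
w_batch, batch_converged = batch_perceptron(points, w0=[-1.0, 0.5, 0.0])
```

Starting from an arbitrary `w0`, each pass moves the weights toward every currently misclassified feature at once. The per-sample variant applies the same correction one feature at a time.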
